The basic operations in Minitab will be similar whether you're dividing the data into two samples for training and validation or into three samples for fitting, validation, and testing. The goal is usually to use part of the data to develop a model and part of the data to test the prediction quality of the model. In scikit-learn's train_test_split, setting either train_size or test_size is enough; you do not need both, because the other is taken as the complement. If you do explicitly set both as fractions, they should add up to 1 (an error is raised if they add up to more than 1).

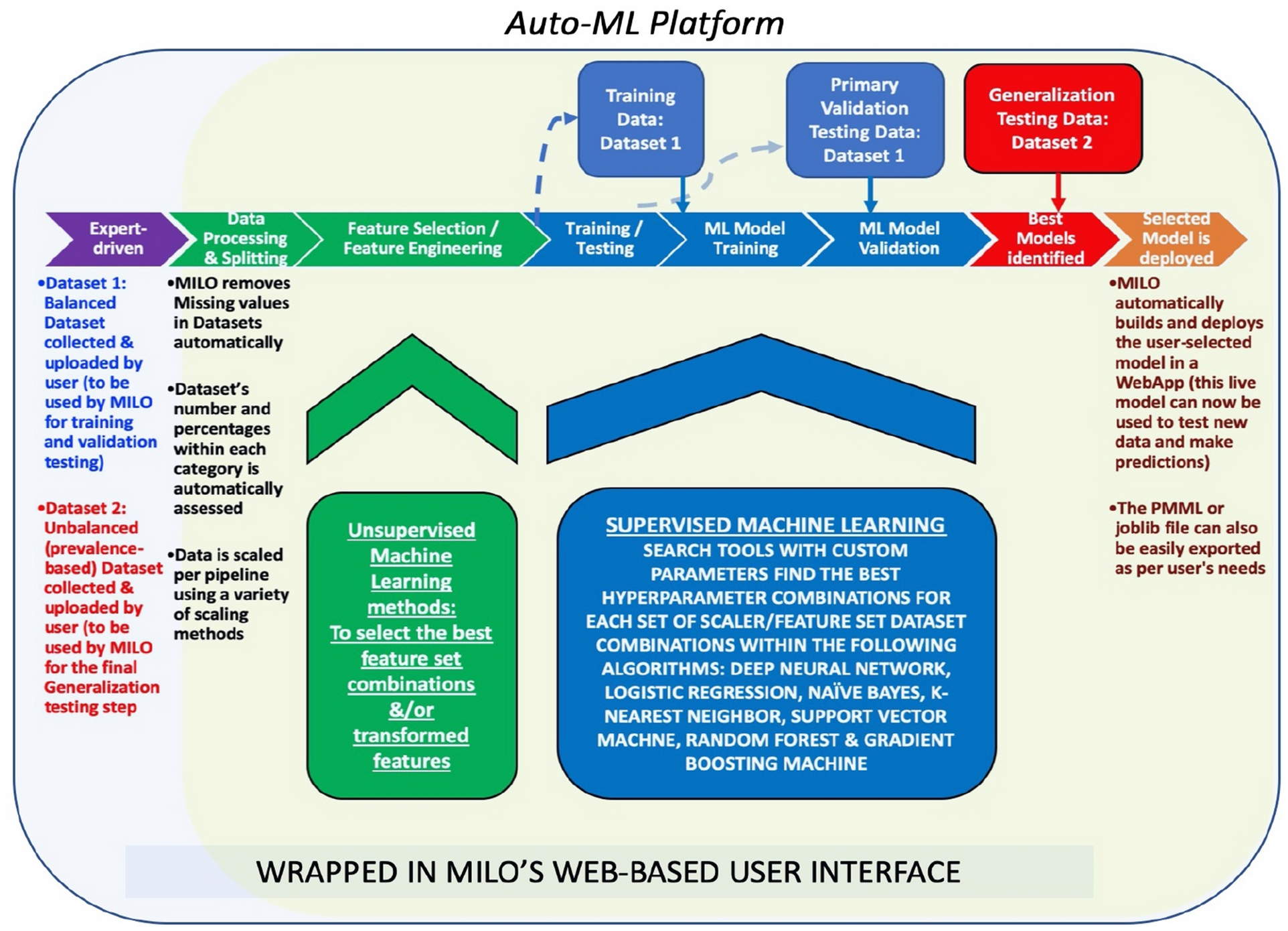

At the beginning of a project, a data scientist divides up all the examples into three subsets: the training set, the validation set, and the test set. Common ratios used are 70% train, 15% validation, 15% test. (See below for more comments on these ratios.) The validation data is used to help prevent a modeling node from overfitting the training data (model fine-tuning), and to compare prediction models. The test data set is used for a final assessment of the chosen model.

Here I load the iris dataset into a variable with iris = load_iris(), which I then use to store the data and target values into two separate variables. I then use train_test_split to split the data in an 80:20 ratio, i.e. 80% of the data will be used for training the model while 20% will be used for testing. You should set a random_state for reproducibility.
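The 80:20 iris split described above can be sketched as follows. This is a minimal illustration, assuming scikit-learn is installed; the variable names and the seed value 42 are arbitrary choices for this example, not taken from the original post.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split

# Load the iris dataset and store features and targets in two variables.
iris = load_iris()
X, y = iris.data, iris.target

# Hold out 20% for testing; train_size is inferred as the remaining 80%.
# random_state=42 is an arbitrary seed, used only to make the split repeatable.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42
)

print(X_train.shape, X_test.shape)  # prints (120, 4) (30, 4)
```

Running it again with the same random_state gives the same rows in each subset, which is the point of fixing the seed.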

To do so, both the feature and target vectors (X and y) must be passed to the function. Thankfully, train_test_split automatically shuffles the data first by default (you can override this by setting the shuffle parameter to False). Without shuffling, data stored in class order can leave some classes out of the training set entirely, and that's obviously a problem when trying to learn features to predict class labels. Then perform the model training on the training set and use the test set for validation purposes, ideally splitting the data 70:30 or 80:20. The hold-out sample itself is often split into two parts: validation data and test data.
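To see why shuffling matters, here is a small sketch on made-up data (30 rows, 3 classes, sorted by class). It assumes NumPy and scikit-learn are available. The stratify argument in the second call is an addition not mentioned in the text above; it is used here only so that every class is guaranteed to appear on both sides of the split.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Toy data (an assumption for illustration): 3 classes, 10 rows each, sorted by class.
y = np.repeat([0, 1, 2], 10)
X = np.arange(len(y)).reshape(-1, 1)

# shuffle=False: the last 10 of 30 rows (one third) become the test set.
_, _, y_train_sorted, y_test_sorted = train_test_split(
    X, y, test_size=10, shuffle=False
)
shared_sorted = set(y_train_sorted.tolist()) & set(y_test_sorted.tolist())

# Default shuffling, plus stratify=y to keep class proportions similar in both parts.
_, _, y_train_shuf, y_test_shuf = train_test_split(
    X, y, test_size=10, random_state=0, stratify=y
)
shared_shuf = set(y_train_shuf.tolist()) & set(y_test_shuf.tolist())

print("classes shared without shuffling:", shared_sorted)
print("classes shared with shuffling:", shared_shuf)
```

In the unshuffled case the training set holds only classes 0 and 1 and the test set holds only class 2, so no label is shared between them.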

If you were to take an unshuffled dataset with 3 classes of equal numbers of instances, ordered by class, and split it as 2/3 for training and 1/3 for testing, your newly separated datasets would have zero label crossover: the test set would contain only a class that never appears in the training set.

In the hold-out approach, we randomly split the complete data into training and test sets. Others split the data into training and testing sets, then apply K-fold cross-validation to the training set to build the model and tune hyperparameters, and finally evaluate the model on the test set. I'm pretty sure when I wrote this code I had borrowed a trick from another answer on here, but I couldn't find it to link to.

When the data are ordered in time, you need to simulate the situation in a production environment, where after training a model you evaluate data that arrives after the model was created. The test set should be the most recent part of the data.
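A time-ordered split of this kind might look like the sketch below. It uses only the Python standard library; the records, the function name chronological_split, and the 70/15/15 fractions are hypothetical choices for illustration.

```python
from datetime import date, timedelta

# Hypothetical records: one (timestamp, value) pair per day, deliberately out of order.
start = date(2024, 1, 1)
records = [(start + timedelta(days=i), i * 1.5) for i in range(100)]
records.reverse()

def chronological_split(rows, train_frac=0.70, val_frac=0.15):
    """Sort rows by timestamp, then cut them into train / validation / test in time order."""
    ordered = sorted(rows, key=lambda row: row[0])
    n = len(ordered)
    train_end = round(n * train_frac)
    val_end = train_end + round(n * val_frac)
    # Whatever remains after validation is the most recent data: the test set.
    return ordered[:train_end], ordered[train_end:val_end], ordered[val_end:]

train, val, test = chronological_split(records)
print(len(train), len(val), len(test))
print("test period:", test[0][0], "to", test[-1][0])
```

No shuffling happens here on purpose: every training row is older than every validation row, and every validation row is older than every test row.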

You should use a split based on time to avoid look-ahead bias: train/validation/test, in this order by time.

I've seen many questions about how to use SAS to split data into training, validation, and testing data. (A common variation uses only training and validation.) Probably not the best way, but here is one way to do it. There are basically two approaches to partitioning data. One is to specify the proportion of observations that you want in each role and then, for each observation, randomly assign it to one of the three roles.
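The proportion-based random assignment can be sketched in Python (not SAS) using only the standard library. The function name assign_roles, the 70/15/15 proportions, and the seed are assumptions made for this example.

```python
import random
from collections import Counter

def assign_roles(n_obs, proportions, seed=12345):
    """Independently assign each observation a role with the given probabilities."""
    rng = random.Random(seed)  # the seed is arbitrary; it only makes the result repeatable
    roles = list(proportions)
    weights = [proportions[role] for role in roles]
    return rng.choices(roles, weights=weights, k=n_obs)

proportions = {"train": 0.70, "validation": 0.15, "test": 0.15}
roles = assign_roles(1000, proportions)
counts = Counter(roles)
print(counts)
```

Because each observation is assigned independently, the group sizes are random and only approximately match the requested proportions. If exact sizes are required, a different scheme is needed.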
